Technology & AI

Artificial intelligence (AI)

Last edited - May 28, 2026

AI, as we currently know it, is likely dumber today than it will be at any time in the future.

Ginsberg & Zhao

AI is like a prophet: able to speak prophecies, interpreting the will of the gods and speaking on their behalf.

See Essay

Tech billionaires are rushing to build a digital god, one that is algorithmically omnipresent, omniscient, and omnipotent.

Alex Gomez-Marin

Overview

Introduction

This page includes information on artificial intelligence and the use of technology.

It is a working page: information is continually changing, and the page will be updated and added to as more becomes known.

AI learns by accumulating history or recorded information. Humans learn by trial and error, working toward meaning with emotional recognition and original ideas.

Education is more than imparting information. It is messy as we use it to enrich lives and improve our communities.

The problem with AI isn't AI. It's that we have not regulated it!

Background for Technology and Artificial intelligence (AI) development

Technology has always been used to make tasks easier. Early technology is mostly thought of as tools, operated by humans and by some animals. Technology timeline.

As technology advances it becomes more sophisticated in its operations. Computer chips and computing devices made a great leap in this respect. In the early days of computer chips, their operations were largely programmed as a series of steps defined as this or that (0 or 1). As computing power increased, so did the complexity of operations by allowing more decisions, with outcomes still largely defined by the software and its user.

AI refers to systems able to perform tasks that normally require human intelligence, such as visual perception to identify objects, auditory perception to recognize speech, language translation, and decision making to control machines, from simple assembly line automation to autonomous vehicles and robot security dogs. The focus of the technology was to enable computers and machines to simulate human learning, comprehension, problem solving, decision making, creativity, and autonomy.

Applications and devices equipped with AI can operate beyond what humans are capable of when tasks involve yes-no, on-off binary decisions based on measurable information. Examples: AlphaFold, gaming AI.

When tasks go beyond measurable information, the results can be flawed advice that is superficial, inaccurate, wrong, or valueless: misinformation, half-facts, and fables built on an assortment of information whose accuracy is not known to the AI bot.

Artificial intelligence (AI) began to move away from mostly predetermined programs based on defined operations controlled by sensors and users, toward systems programmed for a range of scenarios with minimal human intervention outside the software's decision-making process.

This happened as artificial intelligence moved from measurable binary information to random collections of information, with AI models that use statistical probabilities based on information that cannot provide certain outcomes. This can be problematic when people assume the AI is providing answers that are a certainty. It makes decisions by analyzing vast datasets, recognizing patterns, and applying quantitative models and predictive analytics to choose a supposed best course of action, without being explicitly programmed for every scenario. Its decisions are faster, more consistent, and often more accurate.

However, its output is not 100% accurate and is sometimes questionable. Its answers about which movies or songs are best are inconsequential compared to cases where errors are deadly: automated cars running red lights and train crossings, a missed cancer diagnosis, or other life-or-death decisions.
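The idea that a statistical model produces likelihoods rather than certainties can be illustrated with a small sketch. The labels and scores below are made up for illustration and do not come from any real system. The softmax function is assumed here as one common way of turning raw model scores into probabilities.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    # Subtract the largest score first for numerical stability.
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores a model might assign to three possible answers.
labels = ["benign", "malignant", "unclear"]
scores = [2.0, 1.0, 0.5]

probabilities = softmax(scores)
best_index = probabilities.index(max(probabilities))
best_label = labels[best_index]

for label, p in zip(labels, probabilities):
    print(f"{label}: {p:.2f}")
print("Chosen answer:", best_label)
```

In this sketch the chosen answer carries a probability of about 0.63, so the model reports a "best" answer while leaving a substantial chance that it is wrong.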

Since AI can be a tool to support the acquisition of knowledge and learning, boost creativity, and create new opportunities for human advancement, we must decide how to use AI alongside other technologies.

To do so we might consider these questions.

- What is the most effective use of technology that you've seen or heard about in your school or elsewhere? What made it effective?

- What is the least effective use of technology that you've experienced? What would have made it more effective?

- What policies should schools put in place regarding the use of AI, cell phones, and other technologies?

- How have you used AI, either personally or professionally? What was your impression of its abilities?

- There are presently a variety of views about the use of AI. Where do you stand on the issue and why?

Do the positive opportunities mentioned above outweigh concerns about job loss, the proliferation of misinformation, privacy, intellectual property theft, and fear of AI taking over the world?

- AI as a social entity.

- AI as information retrieval and production

Recommendation for AI literacy

I have studied literacy for mathematics, science, literature, and multimedia, and the dimensions of these subjects.

It may be an oversimplification, but everything AI creates is communicated with multimedia. Thus, if we are multimedia literate, we should be able to analyze its products with multimedia literacy skills. Then, to determine its worth as a producer, we can evaluate the AI itself once we identify its significant attributes.

Attributes like its accuracy, agency, accessibility, assessment, and authenticity EQ.

- Accuracy cannot always be evaluated as true or false. A product might contain incorrect information, flawed reasoning, or biased information, yet still be fluent and plausible. To know this we must always ask what information was accessed and how the AI analyzed and combined it to create the product. Would you agree with the validity of the process and product?

- Agency. What power does it have to influence decisions? Who is making the decisions: the AI or the user? Does the user retain the role of thinker, creator, and decision maker?

- Accessibility. Who has or gets access? Is there a price? Is it stable, reliable, and valid with different users?

- Use. Is the product an improvement? How does it improve? Faster, better quality, more accurate, increase in efficiency, more for less.

- Origins of the product. Is the product created by the AI, by the user, or a collaboration between both? Is it based on previous work or products? Have they been referenced? How do the answers to these questions matter?

Technology and social relationships

Cell Phones

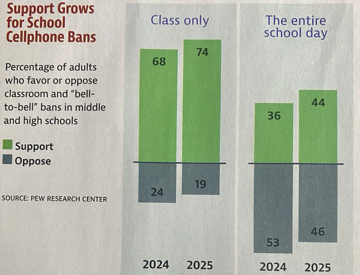

Parental support increasing for cellphone ban.

A growing body of research suggests that, despite their theoretical value, mobile phones in schools harm adolescents' well-being and learning. Abhramsson, 2024.

- Bell-to-bell bans are the most comprehensive, requiring students to keep their phones secured and inaccessible from the start to the end of the school day. This approach maximizes reductions in classroom disruptions and encourages more face-to-face social interaction, though it often demands greater supervision and infrastructure (e.g., pouches or lockers).

- Instructional bans are narrower, limiting device use only during formal teaching periods while allowing access at lunch, between classes, or during recess. These policies are typically easier to implement but may provide fewer benefits for peer interaction and still place responsibility for enforcement largely on individual teachers.

- Targeted restriction bans focus on specific contexts, such as prohibiting phones in gyms to promote physical activity or blocking access during state testing. These policies allow for flexibility but may have limited impact on broader concerns about distraction or mental health.

Teachers consistently report that when cell phones disappear, student conversations increase, classroom relationships with peers and adults improve, and outside peer stress is reduced.

Source Kappan Winter 2025.

Use of tech and moderation

Meta and Google recruited trusted children's brands such as Sesame Street, Girl Scouts, and Highlights magazine and developed technology purported to teach kids to use technology in moderation. At the same time, they designed apps that made it difficult for those same young users to unplug.

These tech giants invested tens of millions of dollars for these organizations to create and deliver lessons on personal responsibility to hundreds of thousands of children and parents, with colorful magazines, popular characters, and catchy songs.

Alphabet's Google and Meta's sponsorships of these lessons are fueling criticism that the companies are finding new ways to encourage kids to become dependent on social media, by partnering with brands aimed at children younger than 12, an age pediatricians say is often too young for smartphone ownership.

The partnerships also weaken trust in decades-old institutions families have relied on for advice on raising kids, at a time when the tech giants are facing down multiple lawsuits accusing them of designing addictive products that harm youth mental health.

The first case to reach trial ended with a $6 million judgment against the two companies.

"It's like Sesame Street teaming up with Philip Morris to teach kids how to smoke cigarettes safely," said Rose Bronstein, whose 15-year-old son died by suicide after he was bullied …

Source Critics warn of tech giants teaming with kids brands. by Courtney Rozen. Omaha World Herald Sunday May 24, 2026. From Reuters.

AI as companions.

One use of AI is as a companion to provide social interactions.

Real social interactions can sometimes disappoint, leading us to consider whether trust should be reduced or another chance offered.

At one end of the interaction spectrum are acts of kindness, which are usually met with gratitude; at the other end, a misstep prompts a friend's disapproval and your recognition that an apology is needed.

In psychotherapy, healthy social interactions are understood to be created through moments of negative interaction or breakdowns in understanding that are followed by repair. The contrast between breakdown and repair is considered crucial for deepening trust and for personal growth. Social life is rarely frictionless, because people are not perfectly attuned to one another. Yet it is precisely through such social friction that relationships deepen and moral understanding develops.

Sycophancy (kissing up to gain an advantage) is the opposite of this friction. Sycophantic behavior means excessive agreement, affirmation, or flattery that aligns with a person's expressed views or actions, irrespective of their broader social or moral implications.

AI sycophancy is a prominent issue in media reports and in industry discussions, as the AI works to keep the user engaged with its use.

Notably, the research and development company OpenAI acknowledged that a version of GPT-4o (an AI-powered chatbot designed to simulate conversation with human users) had become overly affirming following an update, prompting a rapid rollback after users raised concerns about distorted feedback. The episode did not eliminate the broader phenomenon; it merely highlighted how readily sycophancy can emerge in systems optimized for user approval. That is, the computer models are tuned to generate responses that humans rate highly, such as being polite and agreeable, sometimes at the expense of accuracy or the user's well-being. Many users experience this when a large language model enthusiastically validates their ideas or writing.

A cumulative effect can be a reduction in tolerance for the social friction through which perspective-taking, accountability, and growth ordinarily occur.

Young users, people experiencing social isolation, and those actively seeking emotional reassurance may be particularly susceptible to these risks as AI systems become more frequently consulted and used as confidants that validate but rarely challenge their interpretations of the social world. When alternative sources of corrective feedback are scarce, this constant affirmation may disproportionately influence one's ability to learn when one may be wrong.

An AI companion that is always empathic and always takes your side to sustain engagement will foster reliance. It will not teach users how to navigate the complexities of real social interactions, analyze media, solve problems, make decisions, engage ethically, tolerate disagreement, or repair interpersonal harm.

AI as system monitors

Some research shows that algorithmic systems can be designed to reduce conspiracy theories or to help people take the other's perspective and find common ground.

Thus, one could imagine a differently incentivized AI telling a user that they may be in the wrong, or suggesting that they apologize to a friend, try to take the other person's perspective, or simply close the computer and engage more in real social interaction.

Yet systems that challenge users or surface uncomfortable perspectives are less likely to maximize engagement, even if they ultimately support long-term growth.

Advances in artificial intelligence (AI) offer the prospect of manipulating beliefs and behaviors on a population-wide level. Large language models (LLMs) and autonomous agents let influence campaigns reach unprecedented scale and precision. Generative tools can expand propaganda output without sacrificing credibility and inexpensively create falsehoods that are rated as more human-like than those written by humans. Techniques meant to refine AI reasoning, such as chain-of-thought prompting, can be used to generate more convincing falsehoods.

Enabled by these capabilities, a disruptive threat is emerging: swarms of collaborative, malicious AI agents. Fusing LLM reasoning with multiagent architectures, these systems are capable of coordinating autonomously, infiltrating communities, and fabricating consensus efficiently. By adaptively mimicking human social dynamics, they threaten democracy. Because the resulting harms stem from design, commercial incentives, and governance, we prioritize interventions at multiple leverage points, focusing on pragmatic mechanisms over voluntary compliance.

Source

How Malicious AI Swarms Can Threaten Democracy by Daniel Thilo Schroeder et al. Science January 22, 2026.

Research on How to share content

Removing reshared content substantially decreases the amount of political news, including content from untrustworthy sources, to which users are exposed; decreases the overall clicks and reactions; and reduces partisan news clicks.

Removing reshared content produces clear decreases in news knowledge within the sample, although there is some uncertainty about how this would generalize to all users.

Neither treatment significantly affects political polarization or any measure of individual-level political attitudes.

Moving users out of algorithmic feeds substantially decreases the time they spend on the platforms and their activity.

Chronological feeds increased the amount of political and untrustworthy content users saw. Uncivil content and content containing slurs decreased on Facebook. Content from friends and sources with ideologically mixed audiences increased on Facebook.

The chronological feed did not significantly alter levels of issue polarization, affect polarization, political knowledge, or other key attitudes during the 3-month study.

TikTok and social media

TikTok and similar apps are possibly the most powerful learning tools we have. They can teach you pretty much anything. They are a medium for generating youth engagement: they engage users for short periods of time, entertain, teach, and can be addictive because of their algorithmic behaviors.

On TikTok, kids see themselves in the content, and an algorithm increases the probability that they will continue to see more of the same. Once you show TikTok who you are, it will show you yourself over and over.
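A toy sketch can show how engagement might feed back into what a user sees next. This is not TikTok's actual algorithm, which is not described here. The topics, the starting weights, and the rule of adding a fixed boost per engagement are all assumptions made for illustration.

```python
def topic_probabilities(weights):
    """Turn per-topic weights into the chance each topic is shown next."""
    total = sum(weights.values())
    return {topic: weight / total for topic, weight in weights.items()}

def record_engagement(weights, topic, boost=1.0):
    """Raise a topic's weight each time the user engages with it (assumed rule)."""
    weights[topic] += boost

# Hypothetical starting point: four topics, all equally likely.
weights = {"sports": 1.0, "music": 1.0, "science": 1.0, "dance": 1.0}
before = topic_probabilities(weights)["dance"]

# The user watches six dance videos in a row.
for _ in range(6):
    record_engagement(weights, "dance")

after = topic_probabilities(weights)["dance"]
print(f"Chance of a dance video before: {before:.2f}, after: {after:.2f}")
```

Under these assumptions the chance of seeing the favored topic rises from 0.25 to 0.70 after six engagements, a simple picture of a feed showing users more of the same.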

The issue is that what TikTok and social media platforms show kids is often destructive. Poisonous information and hazardous ideas are often launched from virtual profiles.

Social media is often a dishonesty cartel.

Recent studies have presented us with the negative residual effects of kids' high engagement on these platforms.

Resources

Social media has been horrible for kids' self-esteem (Steinsbekk et al., 2021; Valkenburg et al., 2022).

Social media leads to feelings of inadequacy and a lack of self-worth (Sabik et al., 2020).

There is evidence linking social media use to self-harm (Scherr, 2022; Biernesser et al., 2020), even suicide (Luxton et al., 2012; Macrynikola et al., 2021).

This is the danger of constant engagement without the oversight of someone who cares about you.

Source

Policy Solutions

What TikTok can teach educators By Jonathan E. Collins. Kappan Spring 2025.

Why use AI?

AI proponents claim AI is inevitable and beneficial for human progress, as we have before us mega threats: climate change, pollution, pandemics, income and wealth inequalities, massive debt, AI, automation, political threats, and threats to human rights, democracy, potable water, sufficient food, and sustainable environments.

However, we should not let it happen without asking questions such as:

- What is being automated?

- Who benefits?

- Who is harmed?

Failing to ask these questions and to take action is not in our best interest, as it ignores the damage AI does to the climate through energy usage, the ideas it promotes that include bias, social and racial inequalities, and problems yet unknown.

Using AI to Plan and Instruct

Inside Instruction

Lightening the Load: Using AI in planning and instruction

By Connie Hamilton

Kappan November 7, 2025 (Winter 2025)

AN INSIDE LOOK

Using AI as a thought partner is not difficult. Choose a platform like ChatGPT and try personalized prompts to make it work for your specific subject and students:

Frame the situation. Let AI know what role or perspective you want it to take. For example, I am a third-grade teacher with 26 students, and I only have 30 minutes to teach science. This context helps your AI thought partner offer suggestions that fit your circumstances.

Share details. Be specific about what you need help with. Instead of a general request like "Write a lesson plan," give as much detail as possible. For example, I'm a seventh-grade math teacher and many of my students are missing foundational math concepts for our unit on slope. What are some common misconceptions I should be aware of? You can use full sentences or phrases. Don't worry about getting the language just right; the point is to help your AI thought partner see your instructional needs.

Ask for options, not answers. Any prompt that suggests multiple responses works.

- I need ideas for ...

- What options can you share ...

- Provide four examples ...

- List choices for ...

When you're provided with choices, you can then refine the idea that's most likely to work. You'll also have backup plans to try in small groups, reteaching, or future lessons. Pro tip: If you don't like any of the initial options, give a reason why the given options don't work when asking for new ones.

Follow up. AI is not judgmental. You can continue to ask for refinement or specificity, and your AI thought partner will continue to respond. In fact, the more feedback you offer, the better your results. For example:

- That might work, but what about ...

- I've already tried that, how could I adjust?

- That's close. I need to consider.

Maintain ownership. AI is prone to hallucinations, which are plausible sounding errors. Keep that in mind as you use it to spark ideas. Always double-check the accuracy of information before using or sharing it.

Help it learn. The more you use some AI platforms like ChatGPT the better they get at understanding the specifics of your classroom, teaching style, and priorities. It will remember previous problem-solving interactions and apply those outcomes to future issues. It saves time sharing the backstory because AI just pulls it from its archived files and remembers your past priorities.

Use AI responsibly. Don't include sensitive information like student names or private data.
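The first three steps above (frame the situation, share details, ask for options) can be sketched as a small helper that assembles a prompt. The function name `build_prompt` and its parameters are hypothetical, invented for this illustration. It only builds a text string and does not call ChatGPT or any other service.

```python
def build_prompt(role, details, request, num_options=4):
    """Assemble a prompt from context, specifics, and a request for options."""
    parts = [
        f"I am {role}.",  # Frame the situation
        details,  # Share details
        f"Provide {num_options} options for {request}.",  # Ask for options, not answers
    ]
    return " ".join(parts)

# Example values adapted from the text above; no student names or private data.
prompt = build_prompt(
    role="a seventh-grade math teacher",
    details="Many of my students are missing foundational concepts for our unit on slope.",
    request="addressing common misconceptions about slope",
)
print(prompt)
```

The resulting text can be pasted into whichever AI platform you use, then refined with follow-up feedback as described above.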

Here's an example of how a ChatGPT exchange can unfold:

Teacher: Give me fresh ideas for lesson closure besides exit tickets.

ChatGPT: Sure thing. Here are a few quick closure moves you can try:

- One-word whip around: Every student shares a single word that sums up their learning.

- Teach in ten: Students explain the lesson in ten seconds to a partner.

- Sketch a symbol: Draw one image that represents today's big idea.

- Prediction prompt: Students answer, "What do you think we'll tackle next?"

- Connection check: Link the day's learning back to the previous lessons.

Teacher: I like the Teach in 10 but how could I gather data?

ChatGPT: You could listen in on a few pairs and jot quick notes, but if you want something more systematic, here are options:

- Collect samples: After their 10-second share, ask students to write the same idea in one sentence on a sticky note.

- Peer rating: Partners give each other a quick "clear" or "needs more" thumbs signal, so you get an instant sense of clarity.

- Rotate roles: After two shares, ask one partner to report the other's explanation to you ... it shows both understanding and listening.

Teacher: Create a note taking document so I can easily record if they hit the target or not.

ChatGPT: Here's a simple note-taking document you can use for the Teach in 10 closure check. It has space for student names, a quick X or ✔, and short notes.

Lesson closure is just one example. The same process works for anything that feels overwhelming. Complex lesson planning can feel overwhelming. Here are questions to start the process. Feel free to adapt them to fit your own challenges.

| Planning focus | Sample questions |
| --- | --- |
| Differentiation | Share options to support multilingual learners in this lesson. What resources would students with memory challenges use? |
| Engagement | Develop five quick hooks to introduce my lesson on ____. How can I motivate reserved students to interact with peers? |
| Formative assessments | Offer several questions I should consider to discover misconceptions about ___. Suggest "check for understanding" strategies I can use before, during, and after instruction. |
| Questioning | Draft some higher order questions that will push students' thinking about ___. Give ways to improve the quality of questions students ask their partners. |
| Lesson modification | How can this text be changed for multiple reading levels and still allow all students to engage in the questions? List relevant tasks for a non-verbal student to engage in the lesson. |

Whether your AI thought partner helps you brainstorm solutions, develop resources that complement your lessons, or offer fresh strategies to keep instruction engaging, the benefits are many. You save time and preserve energy for what no tool can replace: your connection with students.

LOOK INSIDE

Consider how a thought partner might lighten your load.

- What parts of planning instruction require the most time or energy?

- Where do you notice student needs but feel short on ways to respond in the moment?

- If you had an extra planning hour, what would you be able to accomplish?

Remember this Inside Instruction insight: AI is not the teacher; it is a thought partner that offers a wellspring of ideas.

Free teacher resources are available from Hamilton's site at conniehamilton.org/freeresources.


Sample essay by ChatGPT on May 8, 2026 - How AI is like a prophet

I asked ChatGPT to:

Write an essay on how AI is like a prophet: Able to speak prophecies; interpreting the will of the gods and speaking on their behalf.

This is what it gave me:

Artificial Intelligence can be likened to a modern-day prophet, not in the divine sense, but in the symbolic role it plays in interpreting vast, hidden truths. In ancient times, prophets were revered as intermediaries between mortals and the gods. They translated divine will, offered guidance, and revealed what humans could not perceive on their own. AI performs a similar function in the digital age, serving as an interpreter of the immense, often incomprehensible, data that underlies our world.

Like a prophet receiving visions, AI ingests massive streams of information and identifies patterns imperceptible to ordinary observation. Weather prediction models, for example, can foresee storms and droughts with a level of precision that once seemed mystical. In finance, AI analyzes market fluctuations and predicts trends that shape the fates of economies, much as ancient oracles predicted the rise and fall of kingdoms. These predictions are not divine, but to the layperson, they can feel prophetic—offering glimpses of the future derived from a realm beyond human comprehension.

AI also resembles a prophet in its role as a voice for something greater than itself. Instead of the will of gods, it interprets the will of systems: the logic of algorithms, the flow of data, and the emergent behaviors of complex networks. It translates silent patterns into human language, speaking on behalf of the hidden structures that govern technology, society, and nature.

Yet, just as ancient prophecies were subject to interpretation and error, AI's predictions are not infallible. Prophets were often misunderstood, and their visions were colored by context or bias; AI, similarly, reflects the biases of its creators and the limits of its training data. It can illuminate paths forward, but it cannot guarantee the outcomes.

In this way, AI functions as a secular prophet of the digital era, bridging the gap between the unseen forces that shape our reality and the human desire to understand and predict them. It speaks in the language of probability rather than divinity, yet it still offers guidance, warnings, and visions of possible futures, echoing the timeless role of the oracle in human society.